Here we are, another week gone by and, I hope, another week of successful recordings! We ended last week talking about the beginnings of audio sculpting. We started with normalization and compression to boost the signal and accentuate some nuance in the recording. Along the way, we discovered that those edits also accentuated some things we don’t want in our recording.

So, this week we’re going to look deeper into dealing with those unwanted audio artifacts. As a refresher, here’s the final normalized and compressed audio file from last week.

Notice the hiss in the beginning? If you listen closely, you’ll notice it’s still there throughout the rest of the short recording as well. It’s evident enough that we really want to try to get it out of there. Fortunately, we have a tool to do exactly that: the graphic equalizer!

At this point I’d like you to bring up a recording you’ve been working on so that you can follow along.

Now, what is equalization, and how can it solve the issue of all that hiss? The graphic equalizer is a tool to boost or cut the signal within specific frequency ranges. Most graphic equalizers have between 4 and 6 adjustable points at which the level of a frequency can be modified. At each point, the equalizer applies the adjustment along a bell curve, so the change tapers off smoothly into the neighboring frequencies instead of starting and stopping abruptly.
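To make the bell curve idea a little more concrete, here is a rough sketch in Python. It is only an illustration: the function name `bell_gain_db`, the `width_octaves` parameter, and the choice of a Gaussian shape measured in octaves are all my assumptions, not how Audacity or any particular equalizer actually computes its curve.

```python
import math

def bell_gain_db(freq_hz, center_hz, peak_db, width_octaves=1.0):
    """Gain in dB applied at freq_hz by one bell-shaped EQ point.

    Assumption: the bell is modelled as a Gaussian over octaves
    (log2 of frequency). Real equalizers use their own filter shapes.
    """
    # Distance from the centre of the bell, measured in octaves.
    distance = math.log2(freq_hz / center_hz)
    # Full boost or cut at the centre, fading smoothly to either side.
    return peak_db * math.exp(-0.5 * (distance / width_octaves) ** 2)

# A hypothetical 6 dB cut centred on 300 Hz.
for f in (75, 150, 300, 600, 1200):
    print(f, "Hz:", round(bell_gain_db(f, 300, -6.0), 2), "dB")
```

The point to take away is that the cut is strongest at the center frequency and fades out evenly on both sides, which is why a single EQ point affects its neighbors too.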

Due to the freeware nature of the Audacity program, working with the EQ becomes a trial-and-error exercise. However, it is doable. The main issue with the program is that it does not allow for live playback editing. This means that we have to make our edits based on knowledge (even if it’s slim) of where certain sounds sit in the frequency range.

So where does everything land? Well, it all runs in order from low to high, and really most of it is common sense. Bass and kick drums exist in the lower frequencies, generally between 60 and 1000 Hz. The mid range generally sits between 1000 Hz and 8000 Hz, with the high frequencies between 8000 and 20000 Hz. For reference, a healthy human ear can register sounds from about 20 to 20000 Hz. As you age or are exposed to loud noises, the ears lose their ability to hear this full range of frequencies.
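If it helps to see those ranges written down, here is a tiny Python sketch that sorts a frequency into a band. The function name `band_for` is made up for this example, and I’m assuming that anything from 20 Hz up to 1000 Hz counts as "low" and that each boundary belongs to the band above it. The real boundaries are fuzzy, so treat this as a rule of thumb.

```python
def band_for(freq_hz):
    """Name the rough band a frequency falls into, using the ranges above.

    Assumptions: 20 Hz to just under 1000 Hz is "low", and each boundary
    value (1000 Hz, 8000 Hz) belongs to the higher band.
    """
    if freq_hz < 20 or freq_hz > 20000:
        return "outside typical hearing range"
    if freq_hz < 1000:
        return "low"
    if freq_hz < 8000:
        return "mid"
    return "high"

# A few example frequencies, from a low rumble up to hiss territory.
for f in (80, 440, 3000, 12000):
    print(f, "Hz is in the", band_for(f), "band")
```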

If bass exists in the low frequency range, then where does a guitar exist? The real answer to that is everywhere. A guitar has a wide tonal range that dips into the bass register, and some of its playing artifacts sit up toward the top of our hearing range. Knowing this, we can sculpt the instrument’s sound to meet our demands.

What about that hiss in my recording? That too is spread across our hearing range, but fortunately most of it sits above 8000 Hz, where most of the meat of the guitar’s tone won’t be. Yes, we’ll be dropping out some of the niceties like string slides and pick strikes, but in this circumstance those artifacts can be sacrificed for the good of the recording.

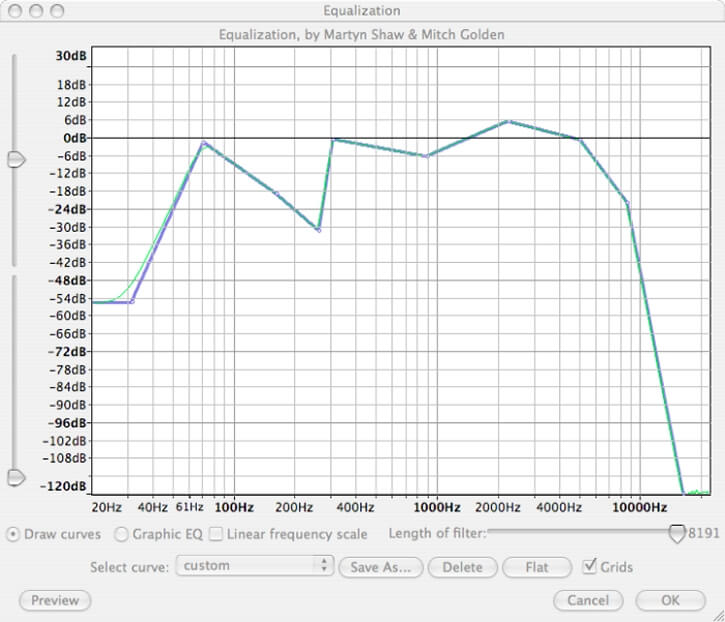

With your recording up, navigate to the Effect menu and select Equalization.

As you can see, I’ve dropped out the frequencies above 10000 Hz in order to combat all that hiss. It’s not perfect, but already you can tell it’s subdued. You may also ask why I’ve dropped out frequencies elsewhere. This is for leveling purposes. If you just drop out the upper frequencies to get rid of that hiss, you create a really unbalanced sound, kind of like hearing a party from the neighbor’s house. To balance this, I’ve cut the frequencies that boom around 300 Hz and rolled off some frequencies below that which aren’t crucial to the guitar sound.
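For anyone who likes to see what an EQ curve like this does under the hood, here is a minimal Python sketch using NumPy. It is not how Audacity implements its Equalization effect. It simply takes the spectrum of the audio, scales each frequency by a gain read off a curve, and converts back. The control points in `EQ_POINTS` and the function name `apply_eq` are hypothetical values I picked to loosely echo the shape described above, not my actual settings.

```python
import numpy as np

# Hypothetical control points (frequency in Hz, gain in dB). These values
# are assumptions chosen to resemble the curve described in the post.
EQ_POINTS = [
    (20, -24.0),     # roll off the very low end
    (80, 0.0),
    (200, 0.0),
    (300, -6.0),     # dip where the guitar booms
    (450, 0.0),
    (10000, 0.0),
    (12000, -30.0),  # drop out the hiss region
    (20000, -30.0),
]

def apply_eq(samples, sample_rate, points=EQ_POINTS):
    """Scale each frequency bin by a gain interpolated from the points."""
    spectrum = np.fft.rfft(samples)
    freqs = np.fft.rfftfreq(len(samples), d=1.0 / sample_rate)
    point_freqs = np.log10([f for f, _ in points])
    point_gains = [g for _, g in points]
    # Interpolate on a log-frequency axis; clamp so log10 never sees 0 Hz.
    gains_db = np.interp(np.log10(np.maximum(freqs, 1.0)),
                         point_freqs, point_gains)
    # Convert dB to a linear factor and apply it to every bin.
    spectrum = spectrum * 10.0 ** (gains_db / 20.0)
    return np.fft.irfft(spectrum, n=len(samples))

# Usage: a 1000 Hz tone passes untouched, a 15000 Hz tone is heavily cut.
rate = 44100
t = np.arange(rate) / rate
tone = np.sin(2 * np.pi * 1000 * t)
hiss_like = np.sin(2 * np.pi * 15000 * t)
mixed = apply_eq(tone + hiss_like, rate)
```

Processing the whole file in one pass like this only makes sense for short clips, but it shows the idea: everything above the cutoff gets turned way down while the mids are left alone.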

So it sounds better now, but what the heck? It got quiet! Well that’s easy to see. Just look at all that signal we decreased or removed. Fortunately, we can go back and normalize the signal, and if need be, add some more compression to get that signal back!
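As a rough illustration of what normalizing does to get that level back, here is a short Python sketch. It assumes the samples are floats between -1.0 and 1.0, and the function name `normalize_peak` and the -1.0 dB default target are my own choices for the example, so check your editor’s Normalize dialog for the settings it actually uses.

```python
def normalize_peak(samples, target_dbfs=-1.0):
    """Scale samples so the loudest peak lands at target_dbfs.

    Assumption: samples are floats in the range -1.0 to 1.0, where
    1.0 is full scale (0 dBFS).
    """
    peak = max(abs(s) for s in samples)
    if peak == 0:
        # Pure silence: nothing to scale.
        return list(samples)
    # Convert the target from dB to a linear amplitude.
    target = 10 ** (target_dbfs / 20)
    scale = target / peak
    return [s * scale for s in samples]

# A quiet clip whose loudest sample is only 0.25 of full scale.
quiet = [0.1, -0.25, 0.05]
print(normalize_peak(quiet))
```

Notice that every sample is multiplied by the same factor, so any hiss that survived the EQ comes up along with the guitar. That’s one more reason to deal with the noise before you bring the level back.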

There we go, we’ve got some volume back after normalization and adding more compression. Be careful not to go too far with compression. The more you compress, the more enunciation you lose. This isn’t as crucial with string instruments, but it can be when you’re working on a vocal track.

So now we’ve got a file that, although still far from ideal, is better than where we started: less noise and more of the good sound we want.

This brings me to a crucial point about recording. It’s far better to get the signal you want during the recording phase so that you’re not fighting to fix a problem (like hiss) afterward. So let’s take a quick look at why I got all that hiss in my recording.

When I recorded this progression through the internal microphone on my laptop, I had the input volume at maximum. Yeah, I wanted to capture as much guitar as possible, but because of that I also pulled unwanted sound into the mix. Secondly, I recorded the take in a loud room, with the heater running, traffic passing outside, etc. The biggest reason, however, is that the microphone I used was never designed to be an instrument mic, or a vocal mic for that matter. It’s a low-end, on-board microphone whose main function is chatting with people over the net.

This points to the importance of having good gear and a well-designed studio setup. This doesn’t necessarily mean you have to spend thousands upon thousands of dollars, though it’s easy enough to do.

Over the next week, I’d like you all to experiment with equalization, try to fix any problems, and sculpt your sound. When you’ve got something down, experiment with adding delay and reverb effects. I’m going to start discussing these shortly, but their use is largely a matter of opinion. I will say that their main function is to thicken the sound and help place the track correctly in a stereo image where other instruments and vocals might also be present.

At this point the basics are starting to fall behind us. We’re getting into some territory where we are going to cover some more intermediate and advanced techniques and that means stepping up the game a little. I’m going to be navigating away from my laptop and firing up my own studio. I’ll be covering micing ideas and techniques, cables, signal flow and more high-powered editing.

Until next week, happy recording!